Inspired by an NZZ Folio article on AI text and image generation using DALL•E 2, I tried to reproduce the (German) prompts. The results: Nouns were pretty well understood, but whether other parts—such as verbs or prepositions—had an effect on the image was akin to throwing dice. Someone suggested that English prompts would work better. Here is the comparison.

The original goal

A related article (actually this one's predecessor) is available in German 🇩🇪: «Reproduzierbare KI: Ein Selbstversuch». It also leads to more information on AI.

The goal of the original self-experiment was to determine how easy it was for a prompt designer to

1. find the right prompt, and

2. wait until a matching image appears.

By trying to reproduce the original prompts, I could estimate #2. (My guess: Several of the images required multiple or even many attempts.)

Switching languages gives a hint on part of #1: whether a different prompt language makes it easier to find the right prompt.

A side lesson from the experiments in German: Current generative AIs have no way to say, “Huh?”. They just do their best. And if, as in this image, MidJourney does not understand the German prompt at all (“Haustür mit Krokodil”, i.e., “front door with crocodile”), it just creates something anyway. (For artistic image generation, this may be acceptable behavior. But not in many other scenarios where we use AI, now or in the future.)

When looking at the images here, you may want to have the original German article side-by-side for direct comparison.

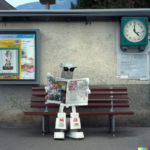

Railway station

After generating the first four images, I found the railway stations to be much more “Swiss” than those created from the German prompt. The houses, the hedges, the walls, the streets: Clearly Swiss; those created from the German prompt, on the other hand, looked much more generic and could be in many other (European) countries instead.

I only noticed the lack of Swissness in comparison to these stunning images. So I wanted to be sure and made two additional sets with identical prompts (DALL•E 2 always creates a set of four independent images for each prompt). If you look closely, you can find some non-Swiss items here, such as the clock in image 6 or the pole in image 7.

To make sure that the perspective originally chosen for the German prompts was not the reason for the lack of Swissness, I created another set with the original German prompt:

Several of the newly generated German-prompt images were much more Swiss: I found number 3 to be particularly Swiss with the overhead line gantry. The ticket vending machine(?) in the first image, however, is a weird combination of equipment, some of it reminiscent of actual Swiss vending machines (such as the top cover).

Conclusion: The original German results showed a lack of Swissness, but this may be partly due to the selection of angles. The new German results seem somewhat more Swiss, but the English prompts feel delightfully like home.

Result: English wins.

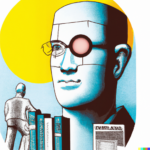

Intelligence

Discussion: Even though “Intelligence” could mean multiple things, the images clearly refer to the meaning of brain power. All four fit the description, in my opinion.

Result: English is a clear winner here.

Antique fun

Discussion: Cats and statues clearly visible in all cases, three could be considered stumbling, two of them over the cat. (The “stumbling” part was clearly absent throughout the German original.)

Result: English again significantly better.

Neural network

Discussion: While none of these actually represents a neural network, I could imagine each of them being used as a teaser image for an article on the topic (even though the eyes in 2 and 4 are not parallel). Maybe the model was actually trained on such teaser images… (The German originals depicted neurons.)

Result: English is the clear winner here.

Culture clash

Discussion: All pass. (The German results only contained robots in two out of four images.)

Result: Go, English, go!

Nile conflict

The first four images were accidentally created using a typo in the prompt: “Egyptioan”, so the set was redone without the typo.

Conclusion: The typo did not seem to make a difference. So probably typos which humans would ignore have little or no effect. All images seem to show some form of dispute in ancient Egyptian style, but none about trash. (Some German originals seemed to include trash cans; and I prefer the colored images there.)

Result: For once, I think German has the advantage.

Sneakernet

Description: The first two images use clouds for part of the shoe, for the third it’s decoration, and the last reminds me of a little child hitting its banana-flavored ice cream until it looks like a cauliflower. I find the German originals much more impressive, if slightly less accurate.

Result: Let’s call that one a tie.

The artist

This prompt’s translation is ambiguous, depending on how you parse the sentence: “Ein Roboter an der (Schreibmaschine von Paul Klee)” (parentheses added for grouping) would be “A robot at Paul Klee’s typewriter”, which is how I had always read it. However, “A robot at a typewriter by Paul Klee”, derived from “(Ein Roboter an der Schreibmaschine) von Paul Klee”, might be what the original prompt actually intended. So, your choice, both are here.

Description: The first prompt clearly and reliably matches the “robot at a typewriter” part, even the “at”, which was all but ignored in the German original. However, the second prompt looks much more artistic, more homogeneous in style within each image. The German original was more “original”, independent, more what a real artist would do given the prompt.

Result: Even if we give the German interpretation extra points for “free thinking”, the English versions would be much more likely to hang in a museum or a residence.

Crown jewels

I had been using the German originals as showcases for how the AI can “imagine” fictitious scenes no one has ever seen, because all it ever does is create fictitious things. (Assigning human traits and concepts to AI is wrong. So I shouldn’t have written “imagine” in the first place. But the flesh is weak…)

However, I was disappointed with the first set of “royal skateboards”: Not really royal, and all depicted from the underside, some with impossible mechanics. So I gave it two more chances with the same prompt.

Description: The “exhibition” part feels slightly more pronounced in comparison to the German original. However, the German output is definitely much more “royal skateboard” than the vast majority of the above.

Result: This point is clearly a win for German.

Stained-glass windows

Description: In the German original, the “as a” part was causing confusion. In English, we have a perfect score, even with a second set.

Result: English wins hands down. Forget German here, it did not even qualify for the finals.

Bonus track: Scary doors

Since I have been using “Haustür mit Krokodil” (“(front) door with crocodile”) as a simple test of whether AIs understand German, here is what DALL•E 2 does with the English version.

“Crocodile” seems to add green color and crocodile texture to the images, even to (parts of) the door.

If you look closely at image number 2, you will see that the gray thing at the lower left is debris(?), even though it looks like a crocodile at first glance. Maybe the prompt was misunderstood as “croco-tile”… 😉

Lessons learned

As a word of warning, the number of images generated for this impromptu “study” is way too small to be able to come to solid conclusions. So take any of these conclusions with plenty of skepticism.

The German text concluded:

Nouns are fulfilled, everything else is pure luck. The images rarely matched the description, but all were beautiful.

Marcel Waldvogel (in: “Reproduzierbare KI: Ein Selbstversuch”, paraphrased)

For English, noun rendering remains very good. In addition, the relationship between the items is often reflected more accurately (with exceptions). This is especially prominent with stained glass.
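To put the sample-size warning into numbers, the per-prompt verdicts from the “Result:” lines above can be tallied in a few lines. This is only a rough sketch: the `verdicts` table is my transcription of those lines (counting “The artist” as an English win is my reading of a hedged verdict), and the sign test in the hypothetical helper `sign_test_p` is an illustration added here, not part of the original experiment. It also assumes the prompts are independent and ignores the tie.

```python
from collections import Counter
from math import comb

# Verdicts transcribed from the "Result:" lines of this article.
# "The artist" counted as English is an interpretation, not a stated score.
verdicts = {
    "Railway station": "English",
    "Intelligence": "English",
    "Antique fun": "English",
    "Neural network": "English",
    "Culture clash": "English",
    "Nile conflict": "German",
    "Sneakernet": "tie",
    "The artist": "English",
    "Crown jewels": "German",
    "Stained-glass windows": "English",
}


def sign_test_p(wins_a: int, wins_b: int) -> float:
    """Two-sided sign test: probability of a split at least this lopsided
    if both languages were equally likely to win each non-tied prompt."""
    n = wins_a + wins_b
    k = max(wins_a, wins_b)
    tail = sum(comb(n, i) for i in range(k, n + 1)) / 2 ** n
    return min(1.0, 2 * tail)


counts = Counter(verdicts.values())
for outcome, n in counts.most_common():
    print(f"{outcome}: {n}")

p = sign_test_p(counts["English"], counts["German"])
print(f"two-sided sign test p-value: {p:.3f}")
```

With 7 English wins, 2 German wins, and 1 tie, the p-value comes out at roughly 0.18, so even this lopsided-looking score is compatible with chance at the usual thresholds. That fits the word of warning above.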

“AI psychology”

Some early assumptions on “AI psychology”:

- What we already “know” is that today’s generative AIs are unable to tell the user that they did not understand the instructions. Instead, they will just spin a tale. This tale may be self-consistent, but may bear little or no relationship to the original prompt.

- We “know” that too little training data will make it hard for the AI to create a meaningful scene. This is presumably the reason for “stained glass” failing in German while getting a perfect score in English, and also for the “stumbling” being rendered inaccurately or not at all.

- However, the English skateboards coming in unusual poses “might” (a lot of speculation here!) be a case of too much available data, including data in unusual poses. And if you ask for something unusual, these poses might be more likely to be chosen. (But it could also just be an artifact of the small sample size used here.)

- A pattern I felt emerge: Making the sentence hard to understand will provide the AI with more leeway for interpretation. So, sentences it does not understand (the MidJourney “crocodile” example) or it understands less well (the German “Paul Klee” examples?) will result in images with more “artistic freedom”. This can be an advantage, if you can free yourself from trying to convince the AI to reach your pre-set goal.

You may know the following expression:

What’s worse than a tool that doesn’t work? One that does work, nearly perfectly, except when it fails in unpredictable and subtle ways.

Cory Doctorow on AI

However, if all you plan to do is create something for the beauty of it, and are willing to spend some time in weeding out what you do not consider nice enough, AI image generation is a great (and enjoyable) helper. Or time-waster… Anyway: Enjoy these images, and the ones you might create.

DIY

Did I whet your appetite? Here are some tools you might want to try:

- DALL•E 2, used here: Requires an account (email address+mobile number); free when used infrequently. Provides additional features.

- Craiyon, using its “predecessor” DALL•E mini: Free, low resolution, no login necessary. Ideal for learning the ropes.

- Stable Diffusion (Hugging Face): “Stable Diffusion” uses different mechanics behind the scenes. Free, low resolution, no login necessary; “pro” version available without these limits.

- Stable Diffusion (Replicate): Another Stable Diffusion model, with plenty of knobs to turn. Free, low resolution, no login necessary; “pro” version available without these limits.

- MidJourney: Different concept, different UI: Send messages in a Discord group chat. The AI will answer in the group chat, giving you options to refine the images further.

Understanding AI

- The year in review

This is the time to catch up on what you missed during the year. For some, it is meeting the family. For others, doing snowsports. For yet others, it is cuddling up and reading. This is an article for the last group.

- How to block AI crawlers with robots.txt

If you wanted your web page excluded from being crawled or indexed by search engines and other robots, robots.txt was your tool of choice, with some additional stuff like <meta name="robots" content="noindex" /> or <a href="…" rel="nofollow"> sprinkled in. It is getting more complicated with AI crawlers. Let’s have a look.

- «Right to be Forgotten» void with AI?

In a recent discussion, it became apparent that «unlearning» is not something machine learning models can easily do. So what does this mean for laws like the EU «Right to be Forgotten»?

- How does ChatGPT work, actually?

ChatGPT is arguably the most powerful artificial intelligence language model currently available. We take a behind-the-scenes look at how the “large language model” GPT-3 and ChatGPT, which is based on it, work.

- Identifying AI art

AI art is on the rise, both in terms of quality and quantity. It (unfortunately) lies in human nature to market some of that as genuine art. Here are some signs that can help identify AI art.

- Reproducible AI Image Generation: Experiment Follow-Up

Inspired by an NZZ Folio article on AI text and image generation using DALL•E 2, I tried to reproduce the (German) prompts. Someone suggested that English prompts would work better. Here is the comparison.